Orchestrating a

Virtual Hub in Go

Container · VM · Network · VPN — a single binary

Ahmet Türkmen

Systems Development Engineer — AWS

Gophers Istanbul · 2026

$ whoami

Ahmet Türkmen

Systems Development Engineer — AWS

Infrastructure, automation, distributed systems

Building daily tools and services with Go

Container, VM and network automation

Agenda (~45 min)

- Why Go? — Advantages of Go for orchestration

- Go ecosystem — From Kubernetes to Terraform

- Architecture overview

- net: Go's networking superpower

- goroutine: Cheap parallelism with fan-out

- channel: Safe communication and error collection

- os/exec: Infrastructure compatibility — virsh, crypto, static binary

- sync: Thread-safe data structures

- Demo & Q&A

1 — Why Go?

Choosing Go for orchestration software

Demo / Lab Environments Are Hard

What Do We Want?

$ orchestrator up -c config.yaml

# → Docker network created (172.19.5.0/24)

# → DHCP & DNS containers running

# → nginx, whoami containers running

# → Debian VM booted with cloud-init

# → WireGuard client config generated

$ orchestrator status # check status

$ orchestrator ssh demo-vm # auto SSH into VM

$ orchestrator down # cleanup

Single binary, zero dependencies, infrastructure defined in YAML.

2 — Go Ecosystem

The de facto language of cloud infrastructure

Critical Projects Written in Go

| Project | Domain | Why Go? |

|---|---|---|

| Kubernetes | Orchestration | Managing thousands of pods with goroutines |

| Docker / containerd | Container | Direct access to OS syscalls |

| Terraform | IaC | Plugin architecture, static binary distribution |

| Prometheus | Monitoring | High throughput metric collection |

| etcd | KV Store | Raft consensus, gRPC |

| CockroachDB | Database | Distributed SQL, coordination via channels |

| Caddy | Web Server | Automatic TLS, net/http stdlib |

| CoreDNS | DNS | Plugin chain architecture |

Kubernetes Is Not Always the Answer

- Sometimes VM + container together on a single server is needed

- Kubernetes cannot directly manage virsh domains

- ~15 MB Go binary vs. a full k8s cluster

- Tailor-made for edge / lab / demo scenarios

# Single command build — no dependencies

$ go build -o orchestrator .

$ ls -lh orchestrator

-rwxr-xr-x 1 user user 14M Feb 26 20:00 orchestrator

# Cross-compile to any platform

$ GOOS=linux GOARCH=arm64 go build -o orchestrator-arm .

3 — Architecture Overview

Package Structure

orchestrator/

├── main.go # entry point

├── cmd/ # CLI commands (cobra)

│ ├── root.go # flags, log settings

│ ├── up.go / down.go # lifecycle

│ ├── ssh.go # interactive SSH into VM

│ └── log_hook.go # goroutine-ID logging

├── config/ # YAML parser

├── orch/ # core orchestrator

│ ├── orchestrator.go # Up / Down / Status

│ ├── dockerctl/ # Docker API wrapper

│ ├── libvirtctl/ # virsh CLI wrapper

│ ├── imagebuilder/ # custom VM image builder

│ └── ipool/ # thread-safe IP pool

└── wg/ # WireGuard config generator

Component Flow

- cmd: up · down · status · ssh

- orch: config · state · error aggregation

- dockerctl: Docker API · container · network · DHCP · DNS

- libvirtctl: virsh CLI · VM · cloud-init · KVM / QEMU

- ipool: IP allocation · /8 → /30 · thread-safe

- wg: WireGuard · X25519 key · config generation

Each box is an independent Go package — explicit dependencies, easy testing.

net: Go's Networking Superpower

IPv4 arithmetic, CIDR parsing, broadcast calculation

What Is CIDR?

Where is it used? Network segmentation, IP pool management, DHCP range definition, firewall rules, routing tables — the fundamental building block of network programming.

net.ParseCIDR — Understanding the Subnet

📁 orch/ipool/ipool.go — NewIPPool()

func NewIPPool(cidr string) (*IPPool, error) {

ip, ipnet, err := net.ParseCIDR(cidr)

if err != nil {

return nil, err

}

ip4 := ip.To4()

if ip4 == nil {

return nil, errors.New("only IPv4 addresses are supported")

}

ones, bits := ipnet.Mask.Size()

if bits != 32 || ones < 8 || ones > 30 {

return nil, errors.New("only IPv4 with prefix length /8 to /30 is supported")

}

netIP := ipnet.IP.To4()

base := ipToUint32(netIP) // network address → uint32

bcast := base | ^maskToUint32(ipnet.Mask) // broadcast calculation

start := base + 30 // first 29 IPs reserved for infrastructure

end := bcast - 1 // last usable host

// ...

}

net.ParseCIDR("172.19.5.0/24") → returns three values:

1. IP address (172.19.5.0),

2. Network info — network address + mask (172.19.5.0/24),

3. Error (if CIDR is invalid).

All subnet information in a single line.
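A minimal sketch of those three return values, using only the standard library. The helper name `describeCIDR` is an assumption made for illustration and is not part of the orchestrator. The input deliberately has host bits set, to show that the first value keeps them while the second value is the masked network.

```go
package main

import (
	"fmt"
	"net"
)

// describeCIDR is a hypothetical helper (not from the project). It returns the
// address as written, the masked network, and the prefix length.
func describeCIDR(cidr string) (addr, network string, prefix int, err error) {
	ip, ipnet, err := net.ParseCIDR(cidr)
	if err != nil {
		return "", "", 0, err
	}
	ones, _ := ipnet.Mask.Size()
	return ip.String(), ipnet.String(), ones, nil
}

func main() {
	addr, network, prefix, err := describeCIDR("172.19.5.7/24")
	if err != nil {
		fmt.Println("invalid CIDR:", err)
		return
	}
	fmt.Println("address:", addr)
	fmt.Println("network:", network)
	fmt.Println("prefix: ", prefix)
}
```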

IPv4 = uint32 — Key Insight

📁 orch/ipool/ipool.go — helper functions

// Every IPv4 address is actually a 32-bit integer

func ipToUint32(ip net.IP) uint32 {

return binary.BigEndian.Uint32(ip.To4())

}

func uint32ToIP(n uint32) net.IP {

ip := make(net.IP, 4)

binary.BigEndian.PutUint32(ip, n)

return ip

}

func maskToUint32(m net.IPMask) uint32 {

return binary.BigEndian.Uint32(m)

}

10.42.0.0 → 170_524_672

10.42.255.255 → 170_590_207

Is the address between these two numbers? → A single if is enough.
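The range check can be sketched as follows. `ipv4ToUint32` and `inRange` are hypothetical names for this example. The conversion is the same `encoding/binary` call used by the helpers above, with an extra guard for input that is not IPv4.

```go
package main

import (
	"encoding/binary"
	"fmt"
	"net"
)

// ipv4ToUint32 parses a dotted-quad string and returns it as a big-endian
// uint32. ok is false when the input is not a valid IPv4 address.
func ipv4ToUint32(s string) (n uint32, ok bool) {
	ip4 := net.ParseIP(s).To4()
	if ip4 == nil {
		return 0, false
	}
	return binary.BigEndian.Uint32(ip4), true
}

// inRange reports whether addr lies between first and last (inclusive).
func inRange(addr, first, last string) bool {
	a, okA := ipv4ToUint32(addr)
	lo, okLo := ipv4ToUint32(first)
	hi, okHi := ipv4ToUint32(last)
	if !okA || !okLo || !okHi {
		return false
	}
	return lo <= a && a <= hi // the single comparison
}

func main() {
	n, _ := ipv4ToUint32("10.42.0.0")
	fmt.Println("10.42.0.0 as uint32:", n)
	fmt.Println(inRange("10.42.7.9", "10.42.0.0", "10.42.255.255"))
	fmt.Println(inRange("10.43.0.1", "10.42.0.0", "10.42.255.255"))
}
```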

Broadcast Calculation — Real Code

📁 orch/dockerctl/client.go — EnsureDHCPAndDNS()

_, ipNet, err := net.ParseCIDR(pool.Subnet())

// ...

netIP := ipNet.IP.To4()

mask := ipNet.Mask

// Broadcast = network address OR (NOT mask)

broadcastBytes := make([]byte, 4)

for i := 0; i < 4; i++ {

broadcastBytes[i] = netIP[i] | ^mask[i]

}

broadcast := net.IP(broadcastBytes).String()

// Generate DHCP configuration from subnet info

maskStr := fmt.Sprintf("%d.%d.%d.%d", mask[0], mask[1], mask[2], mask[3])

dhcpIP := pool.FormatIP(2) // x.x.x.2 → DHCP server

dnsIP := pool.FormatIP(3) // x.x.x.3 → DNS server

minRange := pool.FormatIP(4) // x.x.x.4 → DHCP range start

maxRange := pool.FormatIP(pool.BroadcastOffset() - 1)

1. Calculate broadcast → 172.19.5.255

2. Mask → 255.255.255.0

3. Static IPs: .2 = DHCP, .3 = DNS

4. Range: .4 – .254 for clients

With Go's net + encoding/binary — zero external libraries.
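The broadcast and netmask steps can be tried in isolation with a sketch like this one. `broadcastOf` is a hypothetical helper, not the project's `EnsureDHCPAndDNS`. It assumes an IPv4 CIDR and rejects anything else.

```go
package main

import (
	"fmt"
	"net"
)

// broadcastOf returns the broadcast address and dotted netmask of an IPv4 CIDR.
func broadcastOf(cidr string) (broadcast, netmask string, err error) {
	_, ipnet, err := net.ParseCIDR(cidr)
	if err != nil {
		return "", "", err
	}
	netIP := ipnet.IP.To4()
	if netIP == nil {
		return "", "", fmt.Errorf("%s is not an IPv4 network", cidr)
	}
	mask := ipnet.Mask // 4 bytes for an IPv4 network

	// broadcast = network address OR (NOT mask), byte by byte
	bcast := make(net.IP, net.IPv4len)
	for i := range bcast {
		bcast[i] = netIP[i] | ^mask[i]
	}
	return bcast.String(), net.IP(mask).String(), nil
}

func main() {
	bcast, mask, err := broadcastOf("172.19.5.0/24")
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Println("broadcast:", bcast)
	fmt.Println("netmask:  ", mask)
}
```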

DHCP Config Generation — fmt.Sprintf as Template

📁 orch/dockerctl/client.go

dhcpConf := fmt.Sprintf(`default-lease-time 600;

max-lease-time 7200;

authoritative;

option domain-name-servers %s;

option routers %s;

subnet %s netmask %s {

range %s %s;

option subnet-mask %s;

option broadcast-address %s;

}

`, dnsIP, gatewayIP, networkAddr, maskStr,

minRange, maxRange, maskStr, broadcast)

if err := os.WriteFile(dhcpConfPath, []byte(dhcpConf), 0o644); err != nil {

return "", "", "", err

}

No external template engine — Go's backtick strings + fmt.Sprintf are sufficient.

goroutine: Cheap Parallelism

Provisioning containers + VMs concurrently

Why Goroutines?

10,000 goroutines ≈ 20 MB (about 2 KB of initial stack each). 10,000 OS threads ≈ 10 GB (assuming a 1 MB stack per thread).

Two-Level Fan-Out — Real Code

📁 orch/orchestrator.go — Up() method

// ── Start containers and VMs concurrently ──

var (

topWG sync.WaitGroup

topErrs []error

topMu sync.Mutex // protects topErrs

)

// Level 1: Containers ‖ VMs (two top-level goroutines)

topWG.Add(1)

go func() { // ── Container supervisor ──

defer topWG.Done()

var wg sync.WaitGroup

errChan := make(chan error, len(o.cfg.Containers))

for _, c := range o.cfg.Containers {

wg.Add(1)

go func(container config.ContainerCfg) { // Level 2: each container

defer wg.Done()

// Allocate IP, start container...

}(c)

}

wg.Wait()

close(errChan)

// collect errors → topErrs

}()

topWG.Add(1)

go func() { // ── VM supervisor ──

defer topWG.Done()

// same pattern: goroutine for each VM

}()

topWG.Wait() // barrier — wait until all finish

Goroutine Inside Goroutine — Detail

📁 orch/orchestrator.go — container goroutines

for _, c := range o.cfg.Containers {

wg.Add(1)

go func(container config.ContainerCfg) {

defer wg.Done()

entry := o.log.WithField("container", container.Name)

// Reserve static IP if set, otherwise allocate randomly

var usedIP string

if container.IP != "" {

usedIP = container.IP

if err := o.pool.ReserveIP(usedIP); err != nil {

entry.WithError(err).Warn("could not reserve static IP")

}

} else {

ip, err := o.pool.RandomIP()

if err != nil {

errChan <- fmt.Errorf("IP allocation %s: %w", container.Name, err)

return

}

usedIP = ip

}

if err := o.dc.RunContainer(ctx, container.Name,

container.Image, container.Cmd, container.Env,

label, usedIP, netName); err != nil {

errChan <- fmt.Errorf("container %s: %w", container.Name, err)

return

}

entry.Info("container started")

}(c) // ← pass as parameter to avoid the closure trap

}

What Is the Closure Trap?

With go func() { ... }() inside a for loop, the function binds to the outer c variable by reference ("closure").

Problem (before Go 1.22): by the time a goroutine starts running, the loop may have already advanced, so goroutines can see a later value, often the last one — the wrong container gets started!

go func(container ContainerCfg) { ... }(c)

When you pass the loop variable as a parameter, the current value is copied, and each goroutine works with its own independent copy.

Note: Go 1.22+ solves this automatically, but this pattern is still recommended for compatibility with older versions.
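A small runnable sketch of the parameter-passing pattern. `startAll` is a hypothetical function for this example. It records which name each goroutine actually received, so the result shows that every goroutine saw its own value.

```go
package main

import (
	"fmt"
	"sort"
	"sync"
)

// startAll launches one goroutine per name and passes the loop variable as a
// parameter, so each goroutine works on its own copy on any Go version.
func startAll(names []string) []string {
	var (
		wg   sync.WaitGroup
		mu   sync.Mutex // protects seen
		seen []string
	)
	for _, n := range names {
		wg.Add(1)
		go func(name string) {
			defer wg.Done()
			mu.Lock()
			seen = append(seen, name)
			mu.Unlock()
		}(n) // the current value of n is copied here
	}
	wg.Wait()
	sort.Strings(seen) // goroutine order is not deterministic, so sort for display
	return seen
}

func main() {
	fmt.Println(startAll([]string{"nginx", "whoami", "dns"}))
}
```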

sync.WaitGroup — Barrier Pattern

main goroutine counter = 0

├── wg.Add(1) ──────────────counter = 1

│ └── go func A()

├── wg.Add(1) ──────────────counter = 2

│ └── go func B()

├── wg.Add(1) ──────────────counter = 3

│ └── go func C()

▼

wg.Wait() ◄── block ──────────counter > 0

· (A done → wg.Done()) ───counter = 2

· (C done → wg.Done()) ───counter = 1

· (B done → wg.Done()) ───counter = 0 ✓

▼

continue! ◄── barrier lifted

WaitGroup holds a counter. Add(1) increments, Done() decrements, Wait() blocks until it reaches zero.

var wg sync.WaitGroup

wg.Add(len(services)) // increment counter

for _, svc := range services {

go func() {

defer wg.Done() // decrement when done

// do work...

}()

}

wg.Wait() // wait until counter reaches 0

channel: Safe Communication

Error collection and synchronization between goroutines

Buffered Error Channel — Real Code

📁 orch/orchestrator.go

// Buffered channel: each goroutine sends its error without blocking

errChan := make(chan error, len(o.cfg.Containers))

go func(container config.ContainerCfg) {

defer wg.Done()

if err := o.dc.RunContainer(ctx, ...); err != nil {

errChan <- fmt.Errorf("container %s: %w", container.Name, err)

return // ← does not block because the channel is buffered

}

}(c)

// Close the channel after all goroutines finish

wg.Wait()

close(errChan)

// Collect all errors with range

var errs []error

for err := range errChan { // read until channel is closed

errs = append(errs, err)

}

if len(errs) > 0 {

return errors.Join(errs...) // Go 1.20+ — combine multiple errors

}

Channel Flow — Visual

With make(chan error, N), N goroutines can each send one error and none of them blocks. If the channel were unbuffered, each send would wait for a receiver.
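The same pattern as a self-contained sketch. `runAll`, `succeed`, `fail` and `errBoom` are hypothetical names for this example. The channel capacity equals the number of tasks, which is why no send can block even though nothing reads until every goroutine has finished.

```go
package main

import (
	"errors"
	"fmt"
	"sync"
)

var errBoom = errors.New("boom")

func succeed() error { return nil }
func fail() error    { return errBoom }

// runAll runs every task in its own goroutine and joins the errors.
func runAll(tasks map[string]func() error) error {
	var wg sync.WaitGroup
	errChan := make(chan error, len(tasks)) // one slot per task

	for name, task := range tasks {
		wg.Add(1)
		go func(name string, task func() error) {
			defer wg.Done()
			if err := task(); err != nil {
				errChan <- fmt.Errorf("task %s: %w", name, err)
			}
		}(name, task)
	}

	wg.Wait()      // all sends have happened
	close(errChan) // lets the range loop below terminate

	var errs []error
	for err := range errChan {
		errs = append(errs, err)
	}
	return errors.Join(errs...) // nil when there were no errors
}

func main() {
	err := runAll(map[string]func() error{"dhcp": succeed, "dns": fail})
	fmt.Println("result:", err)
}
```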

select — Three-Way Wait

📁 orch/dockerctl/client.go — waitForRunning()

func (c *Client) waitForRunning(ctx context.Context,

containerID string, timeout time.Duration) error {

timer := time.NewTimer(timeout)

defer timer.Stop()

ticker := time.NewTicker(500 * time.Millisecond)

defer ticker.Stop()

for {

select {

case <-ctx.Done(): // ① cancellation

return ctx.Err()

case <-timer.C: // ② timeout

return errors.New("container start timeout")

case <-ticker.C: // ③ polling

ins, err := c.c.InspectContainer(containerID)

if err != nil { continue }

if ins.State.Running { return nil }

}

}

}

select = Go's multiplexer. It listens to multiple channels simultaneously and runs whichever branch is ready. Context cancellation comes for free.
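A sketch of the same three-way wait without the Docker client, so it can be run anywhere. `pollUntil`, `pollMillis` and `errTimeout` are hypothetical names. The `ready` callback stands in for the container inspection.

```go
package main

import (
	"context"
	"errors"
	"fmt"
	"time"
)

var errTimeout = errors.New("timed out waiting for condition")

// pollUntil calls ready on every tick until it returns true, the timeout
// fires, or ctx is cancelled, whichever comes first.
func pollUntil(ctx context.Context, interval, timeout time.Duration, ready func() bool) error {
	timer := time.NewTimer(timeout)
	defer timer.Stop()
	ticker := time.NewTicker(interval)
	defer ticker.Stop()

	for {
		select {
		case <-ctx.Done(): // cancellation
			return ctx.Err()
		case <-timer.C: // timeout
			return errTimeout
		case <-ticker.C: // polling
			if ready() {
				return nil
			}
		}
	}
}

// pollMillis is a convenience wrapper that uses a background context.
func pollMillis(intervalMs, timeoutMs int, ready func() bool) error {
	return pollUntil(context.Background(),
		time.Duration(intervalMs)*time.Millisecond,
		time.Duration(timeoutMs)*time.Millisecond, ready)
}

func main() {
	attempts := 0
	err := pollMillis(5, 500, func() bool {
		attempts++
		return attempts >= 3 // pretend the container is running on the third poll
	})
	fmt.Println("polls:", attempts, "err:", err)
}
```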

DHCP + DNS — Parallel Service Start

📁 orch/dockerctl/client.go — EnsureDHCPAndDNS()

type serviceResult struct {

name string

id string

err error

}

results := make(chan serviceResult, len(services))

var wg sync.WaitGroup

wg.Add(len(services))

for _, svc := range services {

svcCopy := svc

go func() {

defer wg.Done()

id, svcErr := c.createStartAndWait(ctx, svcCopy.opts, 30*time.Second)

results <- serviceResult{name: svcCopy.name, id: id, err: svcErr}

}()

}

wg.Wait()

close(results)

// Collect results — separate successes and failures

for res := range results {

if res.err != nil {

errs = append(errs, fmt.Errorf("%s: %w", res.name, res.err))

}

}

Sending structs over channels — carries rich result objects, not just errors.

os/exec: Infrastructure Compatibility

virsh, filesystem, cryptography — all in stdlib

Why libvirt / virsh?

| Reason | Description |

|---|---|

| Abstraction | KVM, QEMU, Xen — single API, standard XML |

| No CGo | virsh CLI → static binary preserved, zero C dependency |

| Graceful fallback | /dev/kvm exists → type='kvm', otherwise type='qemu' |

| Cloud-init | Mount ISO as CD-ROM → VM auto-configures on first boot |

| Hybrid network | VM + container on the same bridge network — real kernel isolation |

os/exec — Managing System Commands

📁 orch/libvirtctl/libvirt.go — DefineVM()

func (c *Client) DefineVM(name, imagePath, cloudInitISO,

network string, memoryMB, vcpus int) error {

// Build the XML template as a Go string

xml := fmt.Sprintf(`<domain type='%s'>

<name>%s</name>

<memory unit='MiB'>%d</memory>

<vcpu>%d</vcpu>

...

</domain>`, virtType(), name, memoryMB, vcpus, ...)

// Send XML to virsh via stdin

cmd := exec.Command("virsh", "define", "/dev/stdin")

cmd.Stdin = strings.NewReader(xml) // ← string → io.Reader

output, err := cmd.CombinedOutput()

if err != nil {

return fmt.Errorf("define VM: %w, output: %s", err, output)

}

return nil

}

exec.Command + cmd.Stdin = data pipeline to external tool. No temp files, no pipe management.
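The stdin pipeline can be tried without libvirt installed. In this sketch `cat` stands in for virsh, which assumes a Unix-like system with `cat` on the PATH. `pipeTo` is a hypothetical helper name.

```go
package main

import (
	"fmt"
	"os/exec"
	"strings"
)

// pipeTo runs a command, feeds input to its stdin, and returns the combined
// stdout and stderr.
func pipeTo(input, name string, args ...string) (string, error) {
	cmd := exec.Command(name, args...)
	cmd.Stdin = strings.NewReader(input) // string → io.Reader, no temp file
	out, err := cmd.CombinedOutput()
	if err != nil {
		return "", fmt.Errorf("run %s: %w, output: %s", name, err, out)
	}
	return string(out), nil
}

func main() {
	// cat echoes stdin back, which makes the data flow visible.
	out, err := pipeTo("<domain type='qemu'>...</domain>\n", "cat")
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Print(out)
}
```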

os.Stat — Environment Detection

📁 orch/libvirtctl/libvirt.go

// KVMAvailable — check hardware virtualization support

func KVMAvailable() bool {

_, err := os.Stat("/dev/kvm")

return err == nil // file exists → KVM is available

}

// Automatic strategy selection based on environment

func virtType() string {

if KVMAvailable() {

return "kvm" // hardware acceleration ✓

}

return "qemu" // software emulation (e.g., AWS EC2)

}

📁 orch/orchestrator.go — cloud-init ISO detection

// Check if file exists — skip CD-ROM if not

cloudInitISO := filepath.Join(

filepath.Dir(vm.Image), "..", "cloud-init", "cloud-init.iso",

)

if _, err := os.Stat(cloudInitISO); err != nil {

cloudInitISO = "" // cloud-init not found → skip CD-ROM

}

If /dev/kvm is missing, your tool should fall back gracefully instead of crashing.

crypto — Pure Go WireGuard Keys

📁 wg/wg.go — GenerateClientConfig()

func GenerateClientConfig(peerName, address string) error {

// Generate 32 random bytes — Go's crypto/rand package

var priv [32]byte

if _, err := rand.Read(priv[:]); err != nil {

return fmt.Errorf("random read: %w", err)

}

// Clamp per RFC 7748 — required for X25519

priv[0] &= 248

priv[31] &= 127

priv[31] |= 64

// Compute public key — pure Go, no CGo, no wg CLI

var pub [32]byte

curve25519.ScalarBaseMult(&pub, &priv)

privEncoded := base64.StdEncoding.EncodeToString(priv[:])

pubEncoded := base64.StdEncoding.EncodeToString(pub[:])

// ... build conf (elided on the slide)

// Write config file — with 0600 permissions

return os.WriteFile(

fmt.Sprintf("wg-client-%s.conf", peerName),

[]byte(conf), 0600)

}

crypto/rand + golang.org/x/crypto/curve25519 = VPN key generation without external tools. Everything included in the static binary.
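For comparison, a sketch that derives the key pair with only the standard library. It swaps in `crypto/ecdh` (Go 1.20+) for `golang.org/x/crypto/curve25519`, so it is not the project's code. `newKeyPair` is a hypothetical name. The clamping step is the same as in the function above.

```go
package main

import (
	"crypto/ecdh"
	"crypto/rand"
	"encoding/base64"
	"fmt"
)

// newKeyPair returns a clamped X25519 private key and its public key as raw
// 32-byte slices.
func newKeyPair() (priv, pub []byte, err error) {
	priv = make([]byte, 32)
	if _, err := rand.Read(priv); err != nil {
		return nil, nil, fmt.Errorf("random read: %w", err)
	}

	// Clamp per RFC 7748.
	priv[0] &= 248
	priv[31] &= 127
	priv[31] |= 64

	key, err := ecdh.X25519().NewPrivateKey(priv)
	if err != nil {
		return nil, nil, fmt.Errorf("load private key: %w", err)
	}
	return priv, key.PublicKey().Bytes(), nil
}

func main() {
	priv, pub, err := newKeyPair()
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	// Only the public key is printed; the private key stays in memory.
	fmt.Println("PublicKey =", base64.StdEncoding.EncodeToString(pub))
	fmt.Println("private key length:", len(priv))
}
```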

fmt.Errorf("%w") — Error Wrapping Chain

📁 Pattern used across all packages

// Add context at each layer

if err := o.dc.CreateNetwork(name, subnet, driver); err != nil {

return fmt.Errorf("create network: %w", err)

}

// Caller can inspect the error chain

if errors.Is(err, os.ErrPermission) {

// permission error — special handling

}

// Unwrap to a specific type

var netErr *net.OpError

if errors.As(err, &netErr) {

// network error — special handling

}

// Go 1.20+: combine multiple errors

return errors.Join(err1, err2, err3)

Each error carries the story of the call stack:

"provision failed: container nginx: connect to network: connection refused"

sync: Thread-Safe Data Structures

Mutex-protected IP pool, dual strategy

sync.Mutex — Protecting the IP Pool

📁 orch/ipool/ipool.go

type IPPool struct {

subnet string

base uint32

bcast uint32

start uint32

end uint32

mutex sync.Mutex // ← serializes all access

avail map[uint32]struct{} // small subnet (≤1024): ready set

used map[uint32]struct{} // large subnet: only used ones

lazy bool // true if host count > 1024

rand *rand.Rand

}

func (p *IPPool) RandomIP() (string, error) {

p.mutex.Lock() // lock

defer p.mutex.Unlock() // release lock no matter what

if p.lazy {

return p.randomIPLazy() // large pool: random trial

}

return p.randomIPEager() // small pool: pick from ready set

}

Dual Strategy — Why?

Eager: ≤1024 hosts → fill all IPs into a map, allocate = delete.

Lazy: >1024 hosts → only write used ones, generate randomly, check.

Adapt the data structure to the problem.

| CIDR | Host Count | Naive map approach | Strategy |

|---|---|---|---|

| 192.168.1.0/24 | 225 | ✅ no problem | Eager (ready set) |

| 10.42.0.0/16 | 65,505 | ⚠️ 65K entries | Lazy (trial-and-error) |

| 10.0.0.0/8 | 16,777,185 | ❌ 5 sec, ~1 GB | Lazy (trial-and-error) |
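The eager side of the table can be sketched like this. `eagerPool` and its methods are hypothetical and work on raw uint32 addresses. The project's own eager implementation is not shown here and may differ.

```go
package main

import (
	"errors"
	"fmt"
	"sync"
)

// eagerPool pre-fills every address into a set; allocation is a map delete.
type eagerPool struct {
	mu    sync.Mutex
	avail map[uint32]struct{}
}

// newEagerPool fills the set with every address in [start, end].
func newEagerPool(start, end uint32) *eagerPool {
	p := &eagerPool{avail: make(map[uint32]struct{})}
	if start > end {
		return p
	}
	for a := start; ; a++ {
		p.avail[a] = struct{}{}
		if a == end { // compare before incrementing so the counter cannot wrap
			break
		}
	}
	return p
}

// allocate removes and returns one free address.
func (p *eagerPool) allocate() (uint32, error) {
	p.mu.Lock()
	defer p.mu.Unlock()
	for a := range p.avail { // map iteration order is unspecified in Go
		delete(p.avail, a)
		return a, nil
	}
	return 0, errors.New("no IPs left")
}

// release puts an address back into the free set.
func (p *eagerPool) release(a uint32) {
	p.mu.Lock()
	defer p.mu.Unlock()
	p.avail[a] = struct{}{}
}

func main() {
	p := newEagerPool(30, 34) // five addresses
	a, _ := p.allocate()
	fmt.Println("allocated offset:", a)
	p.release(a)
}
```

Memory grows with the size of the subnet, which is why this form only suits the small-pool rows of the table.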

Lazy Strategy — Code

📁 orch/ipool/ipool.go — randomIPLazy()

// Lazy strategy: start random, linear scan

func (p *IPPool) randomIPLazy() (string, error) {

total := p.end - p.start + 1

if uint32(len(p.used)) >= total {

return "", errors.New("no IPs left")

}

offset := uint32(p.rand.Int63n(int64(total)))

for i := uint32(0); i < total; i++ {

candidate := p.start + (offset+i)%total

if _, taken := p.used[candidate]; !taken {

p.used[candidate] = struct{}{}

return uint32ToIP(candidate).String(), nil

}

}

return "", errors.New("no IPs left")

}

Concurrency Test — 100 Goroutines

📁 orch/ipool/ipool_test.go

func TestPool_ConcurrentAccess(t *testing.T) {

p, err := NewIPPool("172.18.5.0/24")

if err != nil {

t.Fatalf("create pool: %v", err)

}

var wg sync.WaitGroup

errs := make(chan error, 100)

for i := 0; i < 100; i++ {

wg.Add(1)

go func() {

defer wg.Done()

ip, err := p.RandomIP() // concurrent allocation

if err != nil {

errs <- err

return

}

if err := p.ReleaseIP(ip); err != nil { // concurrent release

errs <- err

}

}()

}

wg.Wait()

close(errs)

for err := range errs {

t.Errorf("concurrency error: %v", err)

}

}

go test -race ./orch/ipool/ — validate with the race detector.

Bonus: Logging with Goroutine-ID

Observing parallel execution

Extracting Goroutine ID with runtime.Stack

📁 cmd/log_hook.go

// goroutineHook — hook that adds goroutine ID to logrus entries

type goroutineHook struct{}

func (h *goroutineHook) Levels() []log.Level { return log.AllLevels }

func (h *goroutineHook) Fire(entry *log.Entry) error {

entry.Data["goroutine"] = goID()

return nil

}

func goID() string {

var buf [64]byte

// runtime.Stack writes: "goroutine 42 [running]:\n..."

n := runtime.Stack(buf[:], false)

s := string(buf[:n])

const prefix = "goroutine "

s = s[len(prefix):]

for i := 0; i < len(s); i++ {

if s[i] == ' ' {

if _, err := strconv.Atoi(s[:i]); err == nil {

return s[:i] // "42"

}

break

}

}

return "?"

}

Log Output — Proof of Parallelism

INFO[0001] starting container container=nginx goroutine=34

INFO[0001] defining VM vm=demo-vm goroutine=38

INFO[0001] starting container container=whoami goroutine=36

INFO[0002] container started container=nginx goroutine=34

INFO[0002] starting VM vm=demo-vm goroutine=38

INFO[0003] container started container=whoami goroutine=36

INFO[0004] VM started vm=demo-vm goroutine=38

📁 cmd/root.go — register hook

RootCmd.PersistentPreRunE = func(cmd *cobra.Command, args []string) error {

lvl, err := log.ParseLevel(logLevel)

if err != nil {

return err // reject an unknown --log-level instead of silently ignoring it

}

log.SetLevel(lvl)

log.SetFormatter(&log.TextFormatter{FullTimestamp: true})

log.AddHook(&goroutineHook{}) // ← adds goroutine ID to every log line

return nil

}

Demo

Let's see it all together 🚀

Demo Flow

# Single command build

$ go build -o orchestrator .

# Bring up the environment

$ sudo ./orchestrator up -c config/example.yaml --log-level debug

# Check status

$ sudo ./orchestrator status

# SSH into VM — IP auto-detected

$ sudo ./orchestrator ssh demo-vm

# Clean everything up

$ sudo ./orchestrator down

What happens during orchestrator up?

1. Parse YAML configuration

2. Create Docker bridge network (172.19.5.0/24)

3. Initialize IP Pool (/8 → /30 support)

4. Reserve DHCP (.2) and DNS (.3) IPs

5. Start DHCP + DNS containers (parallel)

6. ┌── Start containers (parallel) ← goroutine

│ ├── nginx:alpine → 172.19.5.10

│ └── whoami → 172.19.5.11

└── Start VMs (parallel) ← goroutine

└── demo-vm → assigned via DHCP

7. Generate WireGuard client config ← crypto

8. Write state file (JSON)

Declarative Configuration

network_name: demo-net

subnet: 172.19.5.0/24

network_type: bridge

containers:

  - name: web-demo

    image: nginx:alpine

    ip: 172.19.5.10

  - name: whoami

    image: containous/whoami:latest

    ip: 172.19.5.11

vms:

  - name: demo-vm

    image: ./images/debian-12.qcow2

    memory_mb: 1024

    vcpus: 2

    packages: [curl, wget, vim, htop, net-tools, nmap]

wireguard:

  enabled: true

  peer_name: demo-client

  address: 10.10.0.2/24

Takeaways

What Go Brings to Infrastructure

| Go Feature | Usage in Project | Alternative Cost |

|---|---|---|

| net | CIDR parsing, IP arithmetic, broadcast | Python: ipaddress + 3rd party |

| goroutine | Container + VM parallel provisioning | Thread pool + complex synchronization |

| channel | Error collection, service coordination | Callback hell or mutex noise |

| os/exec | virsh, brctl, ip command management | Shell script wrapping / subprocess |

| sync | Thread-safe IP pool, error list | Lock library + race debugging |

| crypto | WireGuard X25519 key generation | OpenSSL dependency / CGo |

Key Design Decisions

- Graceful fallback — no KVM → use QEMU, no cloud-init → skip CD-ROM

- Think in uint32 — IPv4 arithmetic becomes integer comparison

- Dual strategy — adapt data structure to input size (eager vs. lazy)

- Error chain — add context at each layer with %w

- Fan-out + channel — Go's concurrency primitives combine naturally

- Test concurrency — 100 goroutines + race detector = confidence

Next Steps

- Context-based cancellation and graceful shutdown

- gRPC API for remote orchestration

- Prometheus metrics (container count, IP usage, …)

- VM snapshot and restore

- WireGuard mesh for multi-server support

Questions?

Q & A

Ahmet Türkmen

linkedin.com/in/mrturkmen

github.com/mrtrkmn

Systems Development Engineer — AWS

Thank you! 🙏

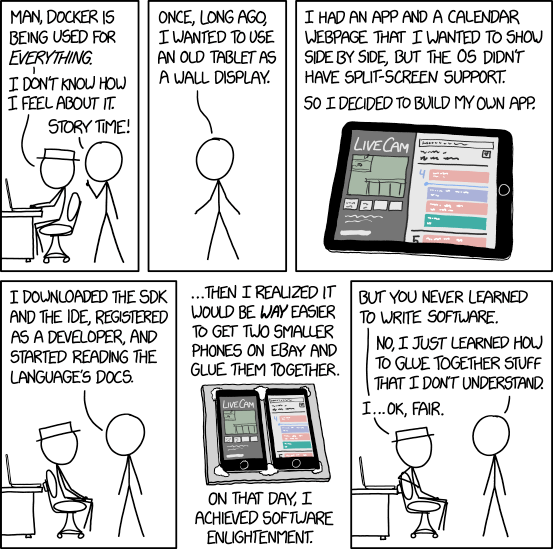

xkcd.com/1988 — "Containers"